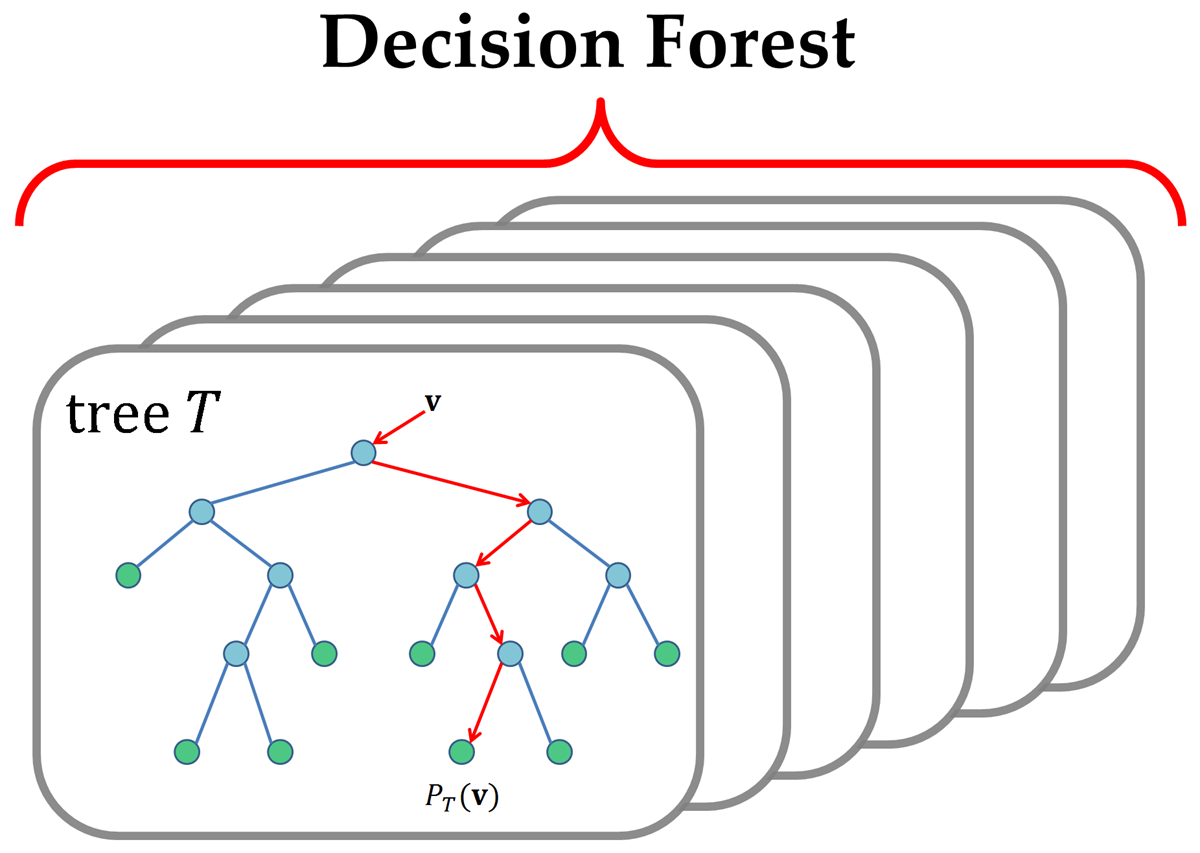

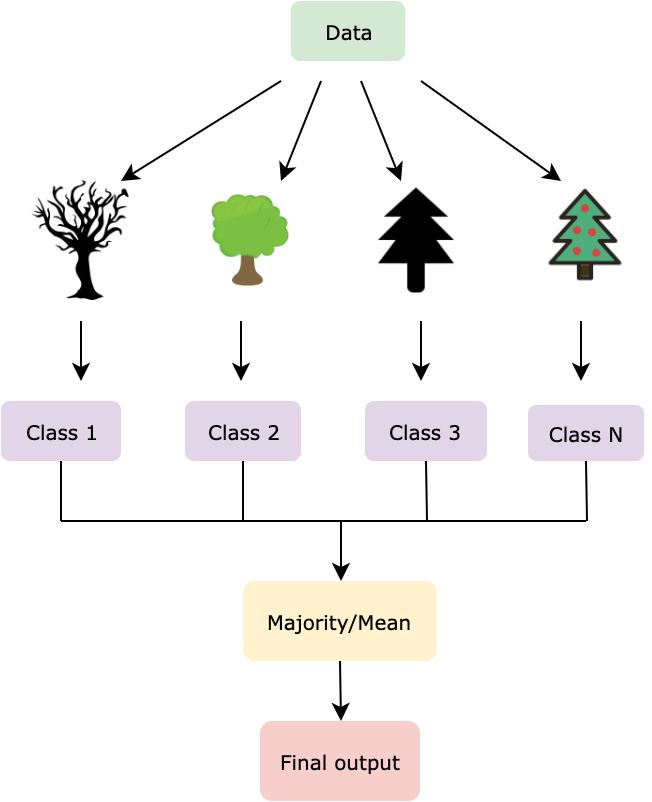

Random forest

3/12/2023

Random forest is based on the concept of bagging: the results of many decision trees, each trained on a different sample of the data set, are merged to reduce the variance of the predictions. The random forest method adds further randomness and diversity by applying the bagging approach to the feature space as well. Rather than greedily searching every predictor for the best split, each branch is generated from a random sample of the features.

Random forest can handle both classification and regression problems, and it can handle large datasets with many dimensions. Compared with a single decision tree, it generally improves accuracy and reduces overfitting.

TensorFlow Decision Forests (TF-DF) bridges the extensive TensorFlow ecosystem by making it simple to connect tree-based models with numerous TensorFlow tools, libraries, and platforms like TFX. A basic example of the algorithm starts with `import tensorflow_decision_forests as tfdf` and `import pandas as pd`, reads the data with `dataset = pd.read_csv("demo/dataset.csv")`, and converts it with `tf_dataset = tfdf.keras.pd_dataframe_to_tf_dataset(dataset, label="my_label")`.

Let's see the steps and the dataset used. We train, evaluate, analyze, and export a binary classification Random Forest on the Palmer Penguins dataset. The dataset has eight variables (n = 344 penguins) and contains numerical features (e.g., bill depth in mm), categorical features (e.g., island), and missing values. TF-DF supports these feature types natively, so no pre-processing is required. The code starts with `import tensorflow_decision_forests as tfdf`, followed by a statement that begins with `classes = dataset_df.`
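To make the bagging idea concrete, here is a minimal sketch in plain Python. It is not how TF-DF implements random forests. It uses one-split trees (decision stumps) instead of full trees, and every function name and the toy data are hypothetical, chosen only for illustration. Each stump is trained on a bootstrap sample of the rows and a random subset of the features, and the forest predicts by majority vote.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows with replacement (the bagging step)."""
    return [rng.choice(rows) for _ in rows]


def fit_stump(rows, feature_indices):
    """Fit a one-split tree using only the given feature indices.

    Each row is (features, label).
    Returns (feature, threshold, left_label, right_label).
    """
    best = None
    for f in feature_indices:
        values = sorted({x[f] for x, _ in rows})
        # Candidate thresholds are midpoints between consecutive values.
        for lo, hi in zip(values, values[1:]):
            threshold = (lo + hi) / 2
            left = [y for x, y in rows if x[f] <= threshold]
            right = [y for x, y in rows if x[f] > threshold]
            left_label = Counter(left).most_common(1)[0][0]
            right_label = Counter(right).most_common(1)[0][0]
            errors = sum(y != left_label for y in left)
            errors += sum(y != right_label for y in right)
            if best is None or errors < best[0]:
                best = (errors, f, threshold, left_label, right_label)
    if best is None:
        # No split is possible: always predict the majority label.
        majority = Counter(y for _, y in rows).most_common(1)[0][0]
        return (feature_indices[0], float("inf"), majority, majority)
    return best[1:]


def fit_forest(rows, n_trees=25, n_features=1, seed=0):
    """Train n_trees stumps, each on its own bootstrap sample and feature subset."""
    rng = random.Random(seed)
    total_features = len(rows[0][0])
    forest = []
    for _ in range(n_trees):
        sample = bootstrap_sample(rows, rng)
        features = rng.sample(range(total_features), n_features)
        forest.append(fit_stump(sample, features))
    return forest


def predict(forest, x):
    """Merge the individual predictions by majority vote."""
    votes = Counter(left if x[f] <= t else right for f, t, left, right in forest)
    return votes.most_common(1)[0][0]


# Toy data (made up for this sketch): two numeric features, two classes.
rows = [
    ((1.0, 1.0), "a"), ((1.5, 0.8), "a"), ((0.9, 1.2), "a"), ((1.2, 1.1), "a"),
    ((5.0, 5.2), "b"), ((5.5, 4.8), "b"), ((4.9, 5.1), "b"), ((5.2, 5.0), "b"),
]
forest = fit_forest(rows, n_trees=25, n_features=1, seed=0)
print(predict(forest, (1.1, 1.0)), predict(forest, (5.1, 5.0)))
```

Because each stump sees different rows and different features, the individual models disagree in different places, and the vote averages out much of that variation. A production library grows much deeper trees and handles categorical and missing values, which this sketch does not attempt.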
